Workspace-Bench 1.0

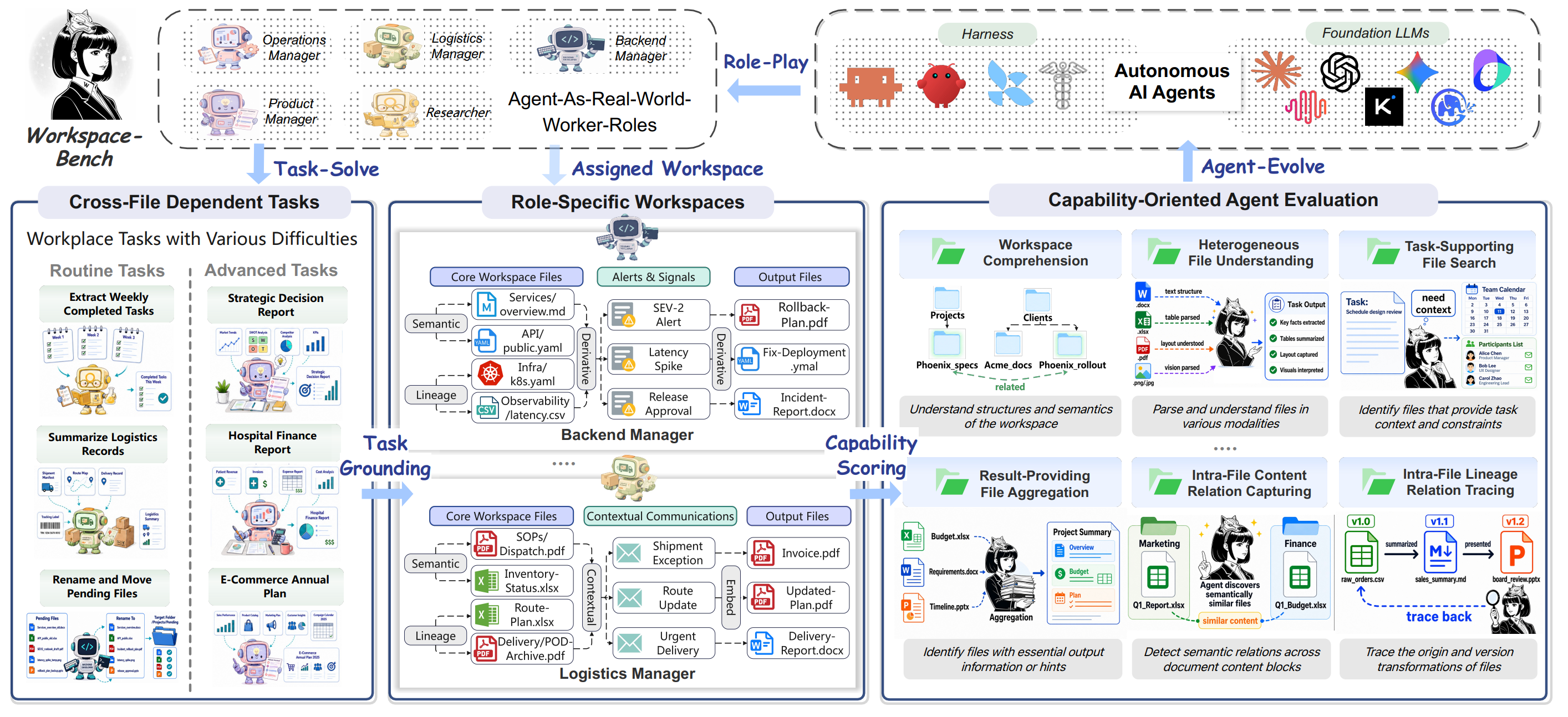

Workspace-Bench evaluates how well AI agents operate in realistic workspaces with large-scale file dependencies. It measures whether agents can discover relevant files, reason across implicit context, modify artifacts safely, and deliver the right output under rubric-based evaluation.

Workspace-Bench highlights

Independent summaries of workspace agents, model backbones, and benchmark composition.

Human reference remains ahead

Workspace-Bench reports a clear gap between human performance and that of the agents evaluated so far.

Latest benchmark updates

Major milestones and feature additions since the benchmark's public launch.

Benchmark release

Workspace-Bench and Workspace-Bench-Lite were released together with the public repository, benchmark structure, task metadata, rubrics, and file-dependency information.

arXiv paper

The official paper introduced the benchmark design, workspace-learning evaluation dimensions, scoring protocol, Lite split, and public leaderboard framing.

Public leaderboard

The public Lite leaderboard was released with agent/model rankings, rubric pass rates, threshold markers, and distribution figures aligned with the released benchmark artifacts.

Explore Workspace-Bench

Go directly to the official leaderboard, dataset composition, and scoring methodology from this section.

Official rankings

Browse public Lite systems, full-summary rows, threshold views, and capability-oriented leaderboard analysis.

Task composition

Inspect worker profiles, collaboration types, rubric distributions, file dependency coverage, and task metadata structure.

Evaluation protocol

Review the rubric-based scoring process, submission requirements, and how benchmark evidence is structured.

Framework

The official Workspace-Bench framework figure summarizes how role-specific workspaces, benchmark tasks, agent execution, and capability-oriented evaluation fit together.

What Workspace-Bench Measures

The benchmark focuses on workspace learning: the ability to identify explicit and implicit file dependencies, integrate context across artifacts, choose the right actions, and produce dependable deliverables in large workspaces.

Workspace Exploration

Navigating deeply nested directory structures and identifying relevant files from noisy candidates.
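As a rough illustration of what exploration involves, the sketch below walks a nested directory tree and keeps files whose names match a keyword. It is not the benchmark's own tooling: the function names, the demo file names, and the name-based matching rule are all assumptions made for this example, and a real agent would likely need to inspect file contents as well.

```python
import os
import tempfile
from pathlib import Path


def find_candidates(root, keywords):
    """Walk root recursively and return files whose name contains any keyword."""
    lowered = [k.lower() for k in keywords]
    matches = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if any(k in name.lower() for k in lowered):
                matches.append(Path(dirpath) / name)
    return sorted(matches)


def build_demo_workspace(root):
    """Create a tiny nested workspace (hypothetical file names, placeholder content)."""
    for rel in [
        "finance/2024/q3/budget_summary.xlsx",
        "finance/2024/q3/notes.txt",
        "archive/old/budget_draft.docx",
        "misc/photo.png",
    ]:
        path = Path(root) / rel
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text("placeholder")


with tempfile.TemporaryDirectory() as tmp:
    build_demo_workspace(tmp)
    # Paths are reported relative to the workspace root for readability.
    demo_hits = [
        p.relative_to(tmp).as_posix() for p in find_candidates(tmp, ["budget"])
    ]
```

Here the keyword "budget" surfaces two files from different branches of the tree, one of them an archived draft. Telling the relevant file apart from such noisy candidates is the harder part of the task and is not attempted in this sketch.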

Task-Supporting Files Utilization

Finding files that provide essential context, references, and domain knowledge needed to complete a task.

Result-Providing Files Utilization

Aggregating result files that contain required outputs, formats, and baseline information.

Content Relations Understanding

Tracing explicit references, semantic connections, and contextual links between related documents.
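The explicit part of this capability can be sketched as a small reference graph: scan each document's text for mentions of other known file names. The regular expression, the extension list, and the sample documents below are assumptions chosen for illustration, not part of the benchmark. Semantic and contextual links would need more than string matching.

```python
import re

# Assumed pattern: a file-like token ending in one of a few common extensions.
FILE_REF = re.compile(r"\b[\w\-]+\.(?:csv|docx|md|pptx|py|txt|xlsx)\b")


def explicit_references(documents):
    """Map each document name to the sorted list of other known documents it mentions."""
    known = set(documents)
    graph = {}
    for name, text in documents.items():
        mentioned = set(FILE_REF.findall(text))
        # Keep only mentions of files that exist in the workspace, minus self-references.
        graph[name] = sorted((mentioned & known) - {name})
    return graph


# Hypothetical workspace contents, keyed by file name.
docs = {
    "report.md": "Figures come from sales.csv; see also plan.docx and report.md.",
    "plan.docx": "Targets are derived from sales.csv.",
    "sales.csv": "region,amount\nnorth,10\n",
}
reference_graph = explicit_references(docs)
```

In this toy workspace, `report.md` points to both `plan.docx` and `sales.csv`, while `sales.csv` references nothing, which makes it a likely upstream source.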

Semantic Heterogeneous File Understanding

Connecting information across diverse modalities including documents, spreadsheets, presentations, and code.

Lineage Tracing

Understanding file versions, revisions, and derivation relationships (e.g., report_v1, report_final).
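A minimal sketch of one lineage heuristic is shown below: strip a version-like suffix from each file name and group files that share the remaining base name and extension. The suffix convention (`_v<number>`, `_draft`, `_final`) is an assumption generalized from the `report_v1` / `report_final` example above, and the function name is hypothetical.

```python
import re
from collections import defaultdict

# Assumed suffix convention: _v<number>, _draft, or _final just before the extension.
VERSION_SUFFIX = re.compile(r"_(v\d+|draft|final)$", re.IGNORECASE)


def group_by_lineage(filenames):
    """Group file names that share a base name and extension once the version suffix is removed."""
    groups = defaultdict(list)
    for name in filenames:
        stem, dot, ext = name.rpartition(".")
        if not dot:  # no extension: treat the whole name as the stem
            stem, ext = name, ""
        base = VERSION_SUFFIX.sub("", stem)
        groups[(base, ext)].append(name)
    return {key: sorted(names) for key, names in groups.items()}


lineages = group_by_lineage(
    ["report_v1.docx", "report_v2.docx", "report_final.docx", "budget.xlsx"]
)
```

Grouping by name only proposes candidate lineages. It does not establish which file was derived from which, so modification times or content comparison would still be needed to order the versions.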

Current Performance Gap

Workspace-Bench exposes a large gap between human performance and that of the best AI agents. The gap grows in profiles with more hidden dependencies, denser file graphs, and stricter rubric requirements.

Resources

Use the benchmark website as a compact index to the paper, repository, dataset composition, and submission process.

Leaderboard

Browse official scores, cost comparisons, and capability views.

Dataset Visualizations

Inspect task composition, file types, workspace sizes, and profile distributions.

Methodology

Read the workspace learning definition, scoring rules, and reproducibility protocol.

Representative Tasks

See concrete examples of hidden dependencies and rubric-based grading.